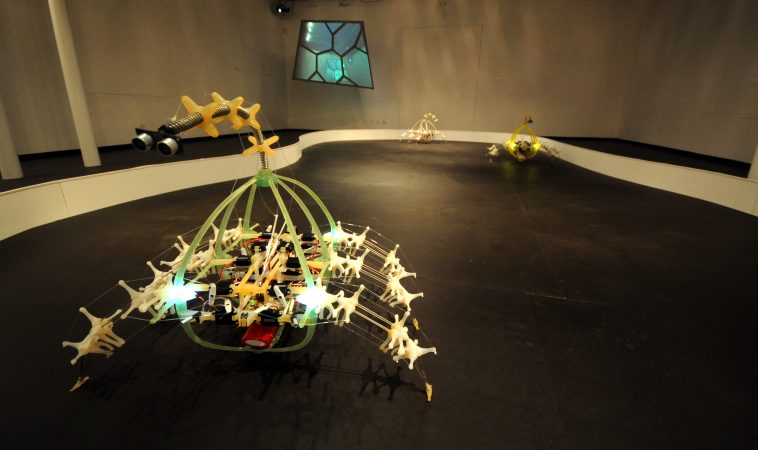

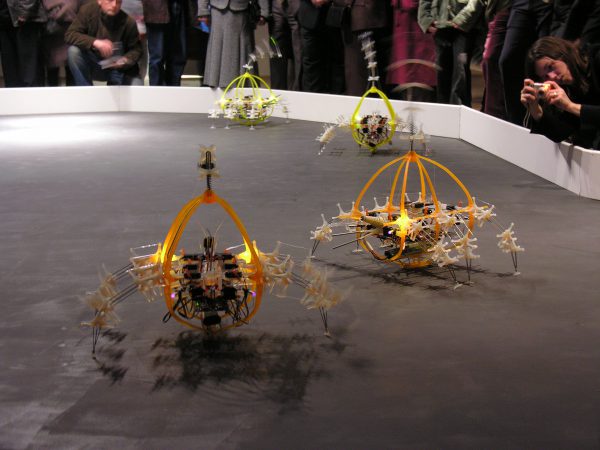

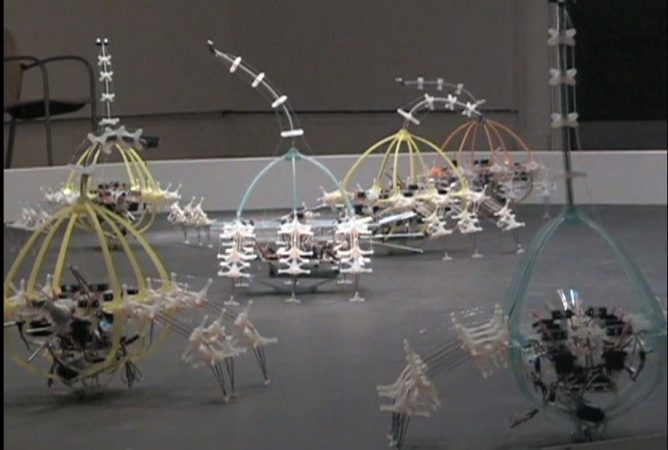

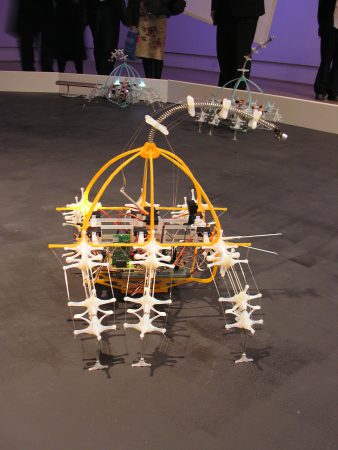

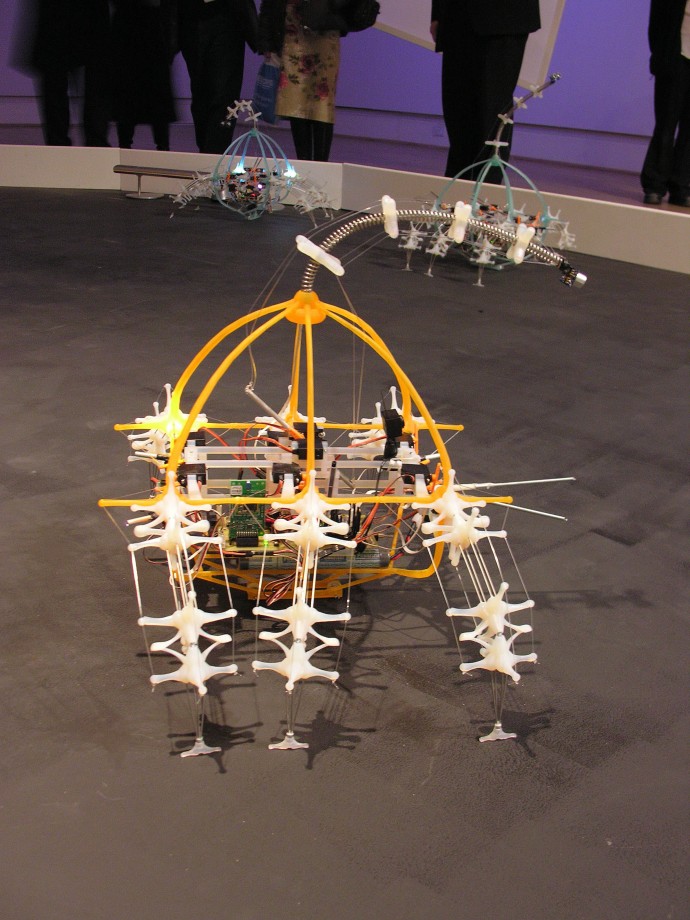

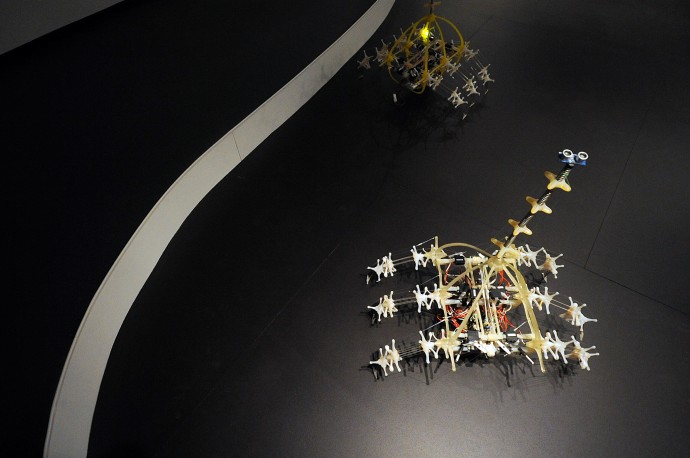

The Autotelematic Spider Bots by Ken Rinaldo and Matt Howard is an artificial-life robotic installation consisting of 10 spider-like sculptures that interact with the public in real time, self-modifying their behaviors based on their interactions with viewers, each other, their environment, and their food source. They were experiments in allowing a robot to emerge into energy autonomy by enabling it to find its own recharge station.

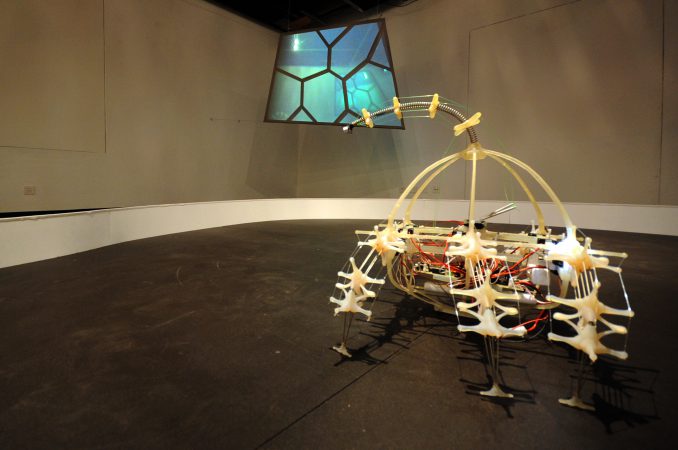

The spider bots see human participants in the installation with long-distance ultrasonic eyes, which have a range of 3-4 meters and sit at the end of flexible, antennae-like necks. The robots were designed to continually seek interaction by swinging these necks back and forth. When they find people, the interactions trigger behavioral responses that manifest immediately and over time as the series evolves.

As real spiders have multiple eyes for long and short distances, these spider bots have new, shorter-distance infrared eyes, allowing them to see and avoid each other as they randomly forage for food.

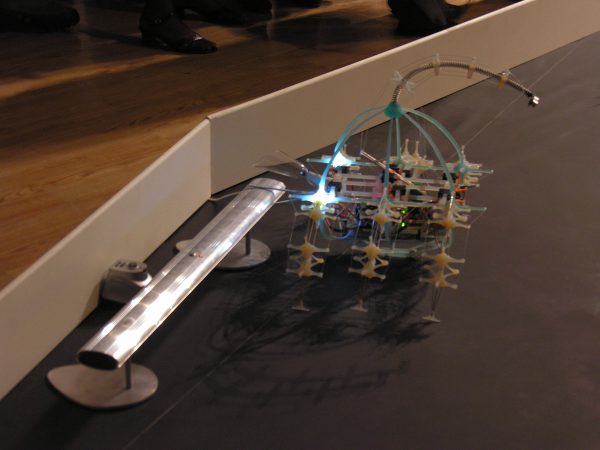

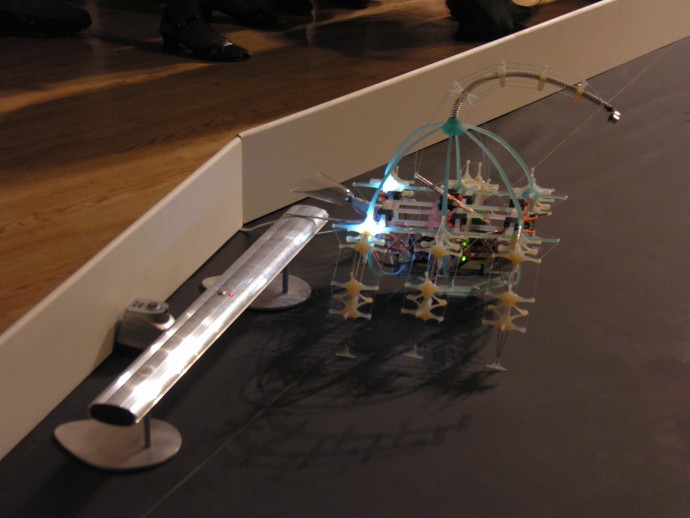

For the spider bots, food takes the form of a recharge station in the surrounding ring. The robots stay equidistant from each other and from the walls of the spider ring by emitting coded infrared pulses from small aluminum tubes placed at their midsections, creating a kind of infrared apron around each spider bot.

When a spider’s infrared eye sees either a human or a fellow robot, super-bright LEDs illuminate the interior of its plastic structure as an indication of its presence.

The spider bots create a constantly blinking environment of LEDs, which indicate the robotic spiders’ internal processing and thinking states and allow humans to understand what the robots are aware of. The Autotelematic Spider Bots (2006) premiered at the Sunderland Museum and Winter Gardens, commissioned by the AV Festival, England, and curated by Honor Harger.

The spiders could communicate through Bluetooth, allowing one central robot to coordinate their activity as they interacted. The robots were designed to find their food source through random foraging, looking for a 1 Hz infrared beacon that sat under a recharge rail. The beacon was deactivated for the installation, pending advances in battery recharge speed and further research.
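As a rough illustration of how a foraging robot might recognize a 1 Hz beacon, the sketch below checks whether a series of infrared pulse timestamps repeats about once per second. The function name `is_beacon`, the 10% tolerance, and the minimum pulse count are assumptions made for this example and are not taken from the spider bots' actual firmware.

```python
def is_beacon(pulse_times, target_hz=1.0, tolerance=0.1, min_pulses=3):
    """Return True if pulse timestamps (in seconds) repeat at roughly target_hz.

    tolerance is the allowed fractional deviation of each interval from the
    expected period (hypothetical value, chosen only for illustration).
    """
    if len(pulse_times) < min_pulses:
        return False  # too few pulses to judge the rhythm
    period = 1.0 / target_hz
    # Time between each pair of consecutive pulses.
    intervals = [b - a for a, b in zip(pulse_times, pulse_times[1:])]
    return all(abs(dt - period) <= tolerance * period for dt in intervals)
```

Requiring several consecutive matching intervals is one simple way to keep a stray infrared flash, or another robot's coded pulses, from being mistaken for the food source.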

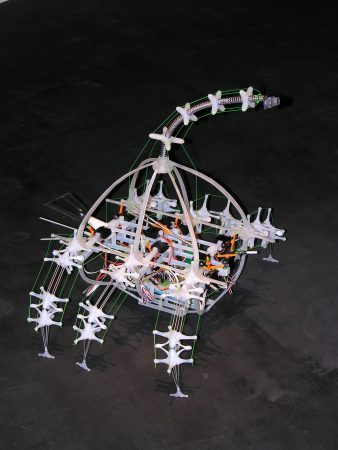

The successful creation of a series of robots that emerge into energy autonomy through inter-communication will have to wait for a significant improvement in battery technology. As cells evolve and allow quicker charging, I hope future versions exhibit this emergent behavior. In the studio, we successfully had one robot find and attach itself to the recharge station with springy chelicerae (pointed appendages that biological spiders use to grasp and pull food into their mouths) at the front of the robotic unit.

The robots also talk to each other and interact with audible chirping sounds from small, amplified speakers attached to their frames. The speakers amplify the twittering sound in response to human interaction. Viewers got a sense of the “emotional” response of the spider bots through the tone of the messages passed to them. Higher tones are associated with fear and repulsion, and lower tones with regular foraging for food (charging).

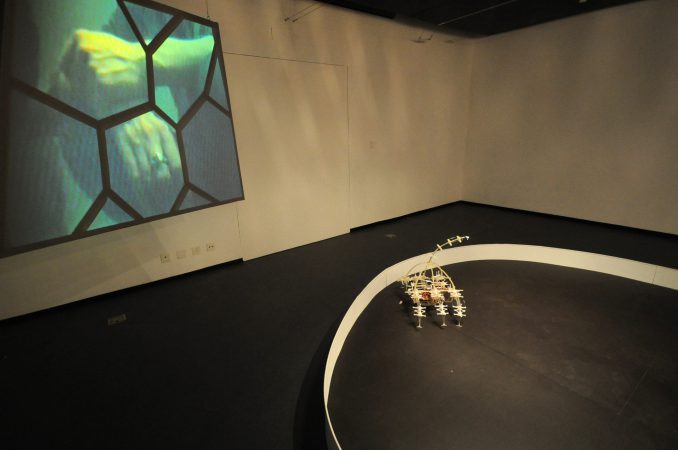

One of the robots has a mini video camera and transmitters to project its vision to the installation wall. This signal was projected onto a screen with built-in Voronoi-like patterns, giving viewers/participants a sense of being captured in the robot’s web. The screen also showed the spiders on a larger scale than the viewers, subtly manipulating the power structure of the human/robot relationship.

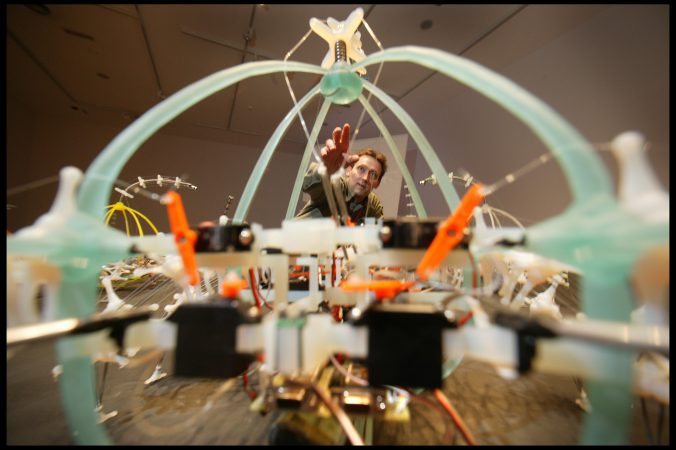

These robots also defined a whole new morphology of robot walking. The legs are based on a tension-compression structure and pull-string mechanics. Each set of two legs acts like a flexible arch held in compression by flexible plastics and monofilament (fishing line). When servo motors pull one of the lengths of monofilament, the arch bends and allows the leg to move naturally at any speed. Although biological spiders have eight legs, six legs enable the robots to walk forward in a tripod gait and turn in either direction. This tripod gait mimics that of cockroaches and other six-legged insects.
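The tripod gait described above can be sketched in a few lines. In this minimal example, the six legs are split into two groups of three that alternate between swinging forward and supporting the body, so three legs are always on the ground. The leg labels (`L1` to `R3`) and the function name are hypothetical and do not reflect the robots' actual control code.

```python
from itertools import islice

# Hypothetical leg labels: L/R = left/right side, 1..3 = front to rear.
TRIPOD_A = ("L1", "R2", "L3")
TRIPOD_B = ("R1", "L2", "R3")


def tripod_gait():
    """Yield (swing_legs, stance_legs) pairs, alternating the two tripods forever."""
    while True:
        yield TRIPOD_A, TRIPOD_B  # tripod A swings while tripod B supports
        yield TRIPOD_B, TRIPOD_A  # then the roles swap


# Print the first four half-steps of the gait cycle.
for swing, stance in islice(tripod_gait(), 4):
    print("swing:", swing, "stance:", stance)
```

Each tripod combines the front and rear legs of one side with the middle leg of the other, which is what keeps the body statically stable at any walking speed.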

The overall inspiration for the robots’ behavior came from a lecture by Dr. Guy Theraulaz. His research at the Centre National de la Recherche Scientifique in France reports that ants operate on rule-driven systems. With this in mind, it became apparent that computers and software, as rule-driven systems, could be structurally coupled with the robots’ organic structure, allowing them to function within and emerge from their environment.

The software for the robots is organized into a structure I call bio-sumption architecture, which allows individual behaviors to be subsumed for the group’s fitness. When the robots are hungry (low battery charge), food-finding becomes their primary behavior and they ignore human interaction.

The programming was a variation on the subsumption architecture developed by Rodney Brooks at MIT.
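A priority-based arbiter in the spirit of subsumption can be sketched as follows. This is only an illustration under stated assumptions, not the robots' actual software: the `Sensors` fields, the `LOW_BATTERY` threshold, the behavior names, and the relative order of obstacle avoidance are all hypothetical. The one ordering taken from the description above is that a hungry robot seeks food and ignores humans.

```python
from dataclasses import dataclass


@dataclass
class Sensors:
    battery_level: float   # 0.0 (empty) to 1.0 (full)
    obstacle_near: bool    # short-range infrared sees a robot or wall
    human_detected: bool   # long-range ultrasonic sees a person


LOW_BATTERY = 0.2  # hypothetical threshold for "hungry"


def select_behavior(s: Sensors) -> str:
    """Return the highest-priority behavior whose trigger condition holds."""
    layers = [
        ("seek_food", s.battery_level < LOW_BATTERY),  # hunger overrides all
        ("avoid_obstacle", s.obstacle_near),
        ("interact_with_human", s.human_detected),
        ("forage_randomly", True),                     # default when idle
    ]
    # The last layer is always active, so a behavior is always found.
    return next(name for name, active in layers if active)
```

For instance, `select_behavior(Sensors(0.1, False, True))` returns `"seek_food"`: with a low battery, the presence of a person is ignored.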

The robots were designed in the 3D program Cinema 4D, which allowed motors and parts to be custom-fitted with exacting accuracy. Rapid prototyping meant rapid evolution and construction of this complex morphology. The final spider bots were output in rapid-prototyping plastics, allowing quick testing of the plastics’ stiffness, flexibility, and translucency. The central bodies, cast in colored acrylic from an early prototype, held the motors and electronics.

Models made of semi-clear polyurethane plastic are impregnated with different Pantone colors to give each robot an individual quality. In essence, robots gave birth to other robots.

The hardware architecture and the integration of sensors with the physical structure were designed to distribute as much of the robot’s intelligence as possible to the intelligent sensors and motor controllers.

For example, the servo motor controller can function like an autonomic nervous system, receiving and sending walking commands without tying up individual processors. Quick processing and rapid sensor activation reduced overall processor overhead.

The robots’ brains are two embedded microcontrollers, with a left- and right-hemisphere approach to parallel processing and a four-wire corpus callosum between the two hemispheres. The Autotelematic Spider Bots installation is an artificial-life chimera: a robotic spider, walking like a cockroach, seeing like a bat, and twittering with the voice of an electronic bird.

The spider bots are set to evolve further as I work on this process with students and robotics researchers at Ecole Polytechnique Federale de Lausanne in Switzerland.

BIBLIOGRAPHY

Books

Art and Science: How Scientific Research and Technological Innovation are becoming key to 21st Century Aesthetics, Thames and Hudson, by Steven Wilson, 162, 111, 129 2010

DO ROBOTS DREAM OF SPRING? – The Art of Ken Rinaldo at the Maison d’Ailleurs. The retrospective catalog has a foreword by science fiction writer Bruce Sterling and curator Patrick Gyger. Yverdon-Les-Bains: September 19, 2010, to March 20, 2011

Reviews

Do Robots Dream of Spring? Ken Rinaldo exhibit at the Swiss Museum of Science Fiction September 21, 2010, by Nicolas Nova.

Exhibitions:

LA MAISON d’AILLEURS, MUSEUM OF SCIENCE FICTION Yverdon-Les-Bains, Switzerland, Sept-Mar 2010-11

UTOPIA & EXTRAORDINARY JOURNEYS

Premiere of Enteric Consciousness and the Pheromone Robot Stories animation; commissions, Autopoiesis, Spider Bots, Paparazzi Bots, Augmented Fish Reality, Machinic Diatoms & Our Daily Dread, invited by Director Patrick Gyger

CONTEMPORARY ART CENTER WINZAVOD Moscow, Russia, March-April 2009

Artist documentary about The Autotelematic Spider Bots and The Augmented Fish Reality. Invited by curator Dmitry Bulatov.

NATIONAL CENTRE FOR CONTEMPORARY ARTS Kaliningrad, Russia, July 2008

Evolution Haute Couture: Art and Science in the Post-Biological Age. Presented artist documentary about The Autotelematic Spider Bots and The Augmented Fish Reality. Invited by Dmitry Bulatov.

PURDUE UNIVERSITY GALLERIES West Lafayette, Indiana. Mar. 3-Apr. 20, 2008

The Autotelematic Spider Bots, Augmented Fish Reality, Our Daily Dread, Farm Fountain, and Machinic Diatoms. Invited by curator Craig Martin. Catalog produced.

RAPID 2007; CONFERENCE AND EXPOSITION Detroit, Michigan, May 1-3 2007

The Autotelematic Spider Bot 2006.

HOPKINS GALLERY, THE OHIO STATE UNIVERSITY, Columbus, Ohio. Oct. 17-Nov. 3 2006

Autotelematic Spider Bots process exhibition. Curator Prudence Gill

MUSKINGUM COLLEGE CALDWELL HALL Muskingum, Ohio. Apr. 2006

Augmented Fish Reality & Autotelematic Spider Bots curated by Ken McCollum. Invited.

SUNDERLAND MUSEUM AND WINTER GARDENS AV FESTIVAL Sunderland, England. Mar. 2006

The worldwide premiere of Autotelematic Spider Bots with Matt Howard was curated and commissioned by Honor Harger.

HOPKINS HALL GALLERY Nov 6-13, Columbus Ohio, 2023

Curator and Participant in the Synthetic Sublime Exhibition of current and emeritus Art and Technology faculty, Graduate Students, and staff in the Department of Art at The Ohio State University.